In the Build phase of the Agentic Shift, we followed the "Lobster Way." Using OpenClaw, we built a hard shell of control around our AI. We wrote custom scripts, focused on learning quickly, and kept our initial costs as low as possible. It was an exciting time. We treated the AI like a powerful tool we were trying to master. But as your startup moves into the Measure phase, the excitement of building must meet the reality of running a business.

The goal has changed. You are no longer asking if the technology can perform a cool trick for a demo. You are now asking a much harder question: Is this technology strong enough to build a real business on? In the Lean Startup cycle, this is the moment of truth. This is where you stop looking at "vanity metrics"—numbers that make you look good but don't mean anything—and start looking at "validated learning" that actually tells you if you are succeeding.

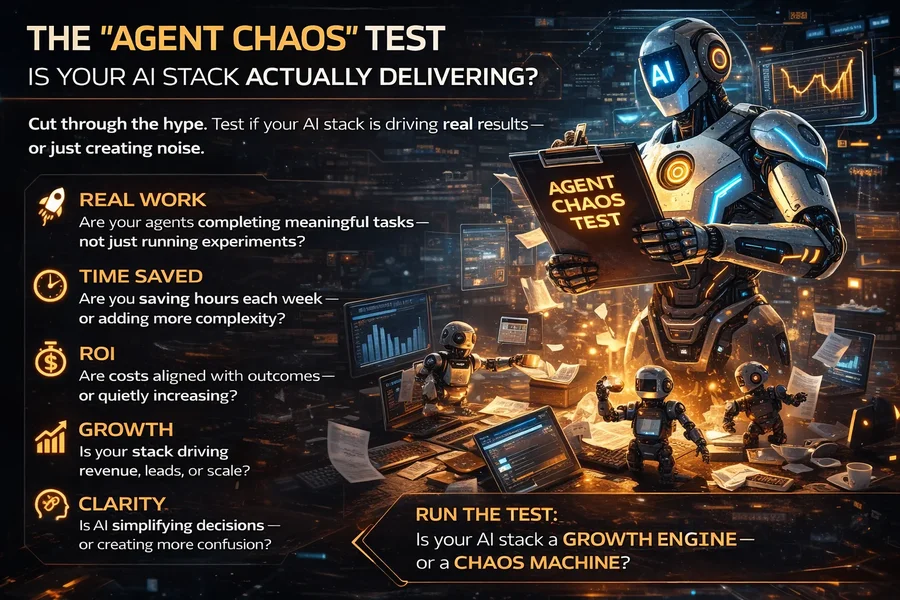

The 2026 Crisis: Welcome to "Agent Chaos"

In 2026, we have entered a period that experts call "Agent Chaos." Because it has become so easy to launch AI agents, many startups now have dozens of them running at once. One agent handles customer emails, another researches competitors, and a third updates the website code. On the surface, it looks like the company is incredibly productive. But underneath, there is often a mess.

Founders often get distracted by the "magic" of seeing an AI move a mouse or type code on a screen. They don't realize that if the AI takes three hours to do a task that a human could do in thirty minutes, the business is actually moving backward. If your agents are conflicting with each other, burning through expensive API credits, or getting stuck in infinite loops, you don't have a business—you have a technical debt factory.

To see where you truly stand, you must revisit your Learning Velocity ($V_L$). In the Build phase, $V_L$ was about how fast you could create. In the Measure phase, it is about how stable and efficient your operations have become. We use this formula to keep us honest:

$$V_L = \frac{\text{Total Finished AI Tasks}}{\text{Time} \times (\text{AI Fees} + \text{Building Costs})}$$

A high $V_L$ in Phase 2 means you are getting more work done for every dollar you spend. If this number is going down, you have likely fallen into the DIY Trap. This happens when you spend so much time fixing your own custom tools (like your OpenClaw setup) that you have stopped building your actual product. In the 2026 market, your competitors are moving faster by using platforms like Manus AI, Perplexity Computer, and Claude Cowork. These platforms handle the "plumbing," allowing founders to focus on the value.
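To make the trend concrete, here is a minimal sketch of the Learning Velocity formula in Python. The units (hours and dollars), the function name, and the monthly figures are all assumptions chosen for illustration; only the shape of the formula comes from the text above.

```python
def learning_velocity(finished_tasks: int, hours: float,
                      ai_fees: float, building_costs: float) -> float:
    """Finished tasks divided by time x (AI fees + building costs)."""
    denominator = hours * (ai_fees + building_costs)
    if denominator <= 0:
        raise ValueError("hours and total cost must both be positive")
    return finished_tasks / denominator


# Hypothetical figures for two consecutive months (not real data).
march = learning_velocity(finished_tasks=400, hours=160,
                          ai_fees=500.0, building_costs=1500.0)
april = learning_velocity(finished_tasks=420, hours=160,
                          ai_fees=600.0, building_costs=2400.0)

trend = "falling" if april < march else "steady or rising"
print(f"March: {march:.6f}  April: {april:.6f}  Trend: {trend}")
```

In this made-up example the team finished more tasks in April, yet the score fell because costs grew faster than output. That is the pattern the DIY Trap tends to produce.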

The Metric Shift: From "Cool" to "Critical"

When your tool moves from a solo project to something your whole team depends on, your metrics must change. In the early days, we were happy if an AI could write a creative poem or explain a complex topic. In 2026, we only care about task completion. In a Lean Startup, "Measurement" is about how much time and money you saved. If your team still spends most of their day "babysitting" the AI and checking its work, you haven't built a helper; you've built a high-maintenance intern.

Here are the four main metrics you need to track in 2026 to decide if your build should stay local or move to a professional platform.

1. ATC: Agentic Task Completion Rate

This is the most important score in 2026. It is a simple "yes or no" measurement: Did the AI finish the job or not? We don't give partial credit. If you tell an AI to find five sales leads and save them to your database, but it finds the leads and forgets to save them, that task scores zero.

- The Benchmark: To be considered "business-ready," an agent needs an ATC score of at least 85%. This means that out of 100 tasks, it finishes at least 85 completely, without any human help.

- The Reality Check: If your custom OpenClaw setup is only hitting 60%, you are in the danger zone. This is usually when teams switch to Manus AI or Perplexity Computer. Because these platforms are cloud-based and highly optimized, they often have much higher "out-of-the-box" reliability.
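The all-or-nothing scoring described above can be sketched in a few lines of Python. The `TaskRun` record and its fields are hypothetical names for illustration, and the sample runs are invented; the 85% bar is the benchmark from this section.

```python
from dataclasses import dataclass


@dataclass
class TaskRun:
    name: str
    steps_required: int
    steps_completed: int
    needed_human_help: bool = False


def is_complete(run: TaskRun) -> bool:
    # No partial credit: every step must be done, with no human rescue.
    return run.steps_completed >= run.steps_required and not run.needed_human_help


def atc_rate(runs: list[TaskRun]) -> float:
    if not runs:
        raise ValueError("need at least one task run")
    return sum(is_complete(r) for r in runs) / len(runs)


# Invented sample log.
runs = [
    TaskRun("find 5 leads and save them", steps_required=2, steps_completed=2),
    TaskRun("find 5 leads and save them", steps_required=2, steps_completed=1),  # found, never saved
    TaskRun("draft support reply", steps_required=1, steps_completed=1, needed_human_help=True),
    TaskRun("update pricing page", steps_required=3, steps_completed=3),
]

rate = atc_rate(runs)
business_ready = rate >= 0.85  # benchmark from the text
print(f"ATC = {rate:.0%}, business-ready: {business_ready}")
```

The second and third runs both count as failures, even though most of the work got done. That strictness is the point of the metric.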

2. MFC: The "Maintenance Friction" Score

This is where the debate between DIY (OpenClaw) and Managed Platforms (Manus/Perplexity) becomes real. MFC measures the hidden cost of "free" or custom tools. Every time an engineer has to fix a broken script, restart a crashed server, or update an API key, this score goes up. In the Lean Startup world, this is called Waste.

In 2026, "Developer Guilt" is common. Founders spend all weekend fixing a bot that only saves them 20 minutes of work on Monday. To calculate MFC, use this formula:

$$\text{MFC} = \frac{\text{Time spent fixing the AI}}{\text{Time the AI actually worked}}$$

If your MFC is higher than 0.2, you are losing the battle. Professional platforms like Claude Cowork eliminate this waste by providing a stable, enterprise-grade environment where the "plumbing" is guaranteed to work.
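As a quick check against the 0.2 threshold, a minimal sketch might look like the following. Both quantities are assumed to be measured in hours over the same period, and the weekly figures are hypothetical.

```python
MFC_LIMIT = 0.2  # threshold from the text


def maintenance_friction(fix_hours: float, productive_hours: float) -> float:
    """Time spent fixing the AI divided by time the AI actually worked."""
    if productive_hours <= 0:
        raise ValueError("productive_hours must be positive")
    return fix_hours / productive_hours


# Hypothetical week: 6 hours of repairs against 20 hours of useful agent runtime.
weekly_mfc = maintenance_friction(fix_hours=6.0, productive_hours=20.0)
over_limit = weekly_mfc > MFC_LIMIT
print(f"MFC = {weekly_mfc:.2f}, over the limit: {over_limit}")
```

Tracking this number weekly rather than once makes it easier to see whether the friction is a one-off setup cost or a permanent tax.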

3. L2V: Latency-to-Value (The Speed Gap)

In 2026, speed is a feature. If a human can do a job in 5 minutes and your AI takes 4 minutes to "think" and process, the benefit is too small to justify the cost. This is called the Speed Gap.

Your local OpenClaw setup is limited by the power of your own computer. However, Perplexity Computer and Manus AI use massive cloud systems. They can use "sub-agent teams" to think about ten things at once. While your local AI is still reading the first page of a document, a managed cloud agent has already read the whole file, checked it against three websites, and written the final summary. If your L2V is too slow, you are not scaling; you are just waiting.
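The text does not give a formula for L2V, so the following is only one possible way to put a number on the Speed Gap: compare how long a human takes with how long the agent takes. The function name and the 2x minimum speedup are assumptions, not part of the original framework; the 5-minute and 4-minute figures are the example from above.

```python
MIN_SPEEDUP = 2.0  # hypothetical bar; pick a value that fits your own costs


def speed_gap(human_minutes: float, ai_minutes: float) -> dict:
    """Compare human and agent durations for the same task."""
    if human_minutes <= 0 or ai_minutes <= 0:
        raise ValueError("durations must be positive")
    return {
        "speedup": human_minutes / ai_minutes,
        "minutes_saved": human_minutes - ai_minutes,
    }


# The example from the text: a 5-minute human job that takes the AI 4 minutes.
gap = speed_gap(human_minutes=5, ai_minutes=4)
worth_it = gap["speedup"] >= MIN_SPEEDUP
print(f"Speedup: {gap['speedup']:.2f}x, saved: {gap['minutes_saved']} min, worth it: {worth_it}")
```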

4. AV: Alignment Variance (The Trust Metric)

This metric is critical for collaborative tools like Claude Cowork. It measures how often a human has to step in to push the AI back in the right direction. We call this "steering."

If you find that your agents are "drifting"—doing the work but in a way that doesn't match your brand or your goals—you have an alignment problem. Claude Cowork uses "Shared Reasoning" to show you exactly why it is taking an action before it happens. This reduces variance and builds trust. If you have to fix the AI's work constantly after it's finished, you need to move to a collaborative workspace where you can guide it in real-time.
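Since AV is described as how often a human has to step in, one simple way to track it is to log the number of steering interventions per task. This is a sketch under that assumption: the function name, the two summary numbers, and the sample log are all hypothetical.

```python
def steering_stats(interventions_per_task: list[int]) -> tuple[float, float]:
    """Return (share of tasks that needed steering, mean interventions per task)."""
    if not interventions_per_task:
        raise ValueError("need at least one task")
    total_tasks = len(interventions_per_task)
    steered_tasks = sum(1 for count in interventions_per_task if count > 0)
    return steered_tasks / total_tasks, sum(interventions_per_task) / total_tasks


# Invented log: each entry is how many times a human corrected the agent on one task.
log = [0, 0, 2, 0, 1, 0, 0, 3, 0, 0]
steered_share, mean_interventions = steering_stats(log)
print(f"{steered_share:.0%} of tasks needed steering, "
      f"{mean_interventions:.1f} interventions per task on average")
```

Watching both numbers helps separate two cases: many tasks that need a small nudge, or a few tasks that drift badly.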

The "Shadow AI" Problem: A System Design Failure

In the Measure phase, many founders discover a hidden problem called Shadow AI. This happens when one person builds a custom OpenClaw agent on their laptop that nobody else on the team can see or use. Because the logs are local and the code is custom, the rest of the company has no idea how the agent is making decisions.

Research from early 2026 shows that 40% of startup AI projects fail because the "plumbing" between team members is broken. If your data is messy and your agents are working in secret, you don't have a platform—you have a collection of toys. This is why teams eventually pivot toward Manus AI or Perplexity. These tools provide central dashboards where every team member can see, measure, and audit what the agents are doing. Transparency is the only way to scale trust.

The Reality Check: DIY vs. Managed vs. Collaborative

| Metric | OpenClaw (DIY) | Manus / Perplexity | Claude Cowork |
|---|---|---|---|
| Visibility | Local logs only (Shadow AI). | Central cloud dashboard. | Visual, shared work history. |
| Success Rate | Varies by developer skill. | High (Managed infrastructure). | High (Human-guided). |
| System Repair | Manual code fixes required. | Automatic retry logic. | Asks human for permission. |
| Scalability | Limited by your hardware. | Unlimited cloud power. | Scales with team size. |

When the Data Says "Switch"

In a Lean Startup, switching directions isn't a failure—it’s a smart move based on what you learned. If your data shows that you are spending more money on developer time to fix OpenClaw than it would cost to simply subscribe to Manus AI, you have a professional responsibility to switch. This is the "Pivot or Persevere" moment of the Measure phase.
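The comparison above is simple arithmetic, and a rough sketch can make the decision explicit. The hourly rate, the repair hours, and the subscription price below are hypothetical placeholders, not real pricing for any platform.

```python
def pivot_or_persevere(fix_hours_per_month: float,
                       developer_hourly_rate: float,
                       subscription_per_month: float) -> str:
    """Compare monthly DIY repair cost with a managed platform subscription."""
    maintenance_cost = fix_hours_per_month * developer_hourly_rate
    return "pivot" if maintenance_cost > subscription_per_month else "persevere"


# Hypothetical numbers: 30 repair hours at $80/hour versus a $500/month subscription.
decision = pivot_or_persevere(fix_hours_per_month=30,
                              developer_hourly_rate=80.0,
                              subscription_per_month=500.0)
print(decision)
```

This sketch only counts repair time. A fuller comparison would also weigh migration effort and any difference in task completion rates, which the numbers above leave out.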

By moving to a professional platform like Perplexity Computer, you get back the hours your engineers currently spend "babysitting" scripts. This allows them to go back to what they do best: innovating and solving problems for your customers.

Steps for the Measure Phase

The Verdict for Phase 2

Measuring your work is the bridge between a "hobby project" and a "real product." In the 2026 Agentic Shift, the winners won't be the people with the coolest custom scripts. The winners will be the people who knew when to stop building tools and start building value.

If your metrics show that you have outgrown doing it yourself, don't be afraid to pivot. Whether you move to the speed of Perplexity, the ease of Manus, or the teamwork of Claude, you are doing it because your business needs to move faster. Data is better than opinions. Trust the numbers, and you will survive the shift.

The "Measure" Manifesto: In 2026, if you can't measure it, you can't manage it. Your Learning Velocity is your only shield against the competition. Don't let your "Lobster Shell" become your cage.
